Snapshot of outcomes related to the HCPC’s education function for the 2018-19 academic year

The data set

The education annual data set includes data on the following areas of our work:

- Approved programmes at academic year end

- Approval process

- Major change process

- Annual monitoring process

- Concerns process

All figures gathered for each section relate to work where we carried out an assessment of programmes in the 2018-19 academic year. This means final outcomes include those that were reached in the following academic year, where the timing of the assessment meant the outcome was finalised later.

Most sources of data count assessments carried out on an individual programme basis, rather than at case level (where a single assessment groups many programmes).

Approved programmes at academic year end

Taking programme closures into account, our overall rate of new programme generation increased to 6 per cent in this period. This amounts to an overall increase of 12 per cent over the past three academic years.

Changes in approved programme numbers between years:

| Academic year | Change in approved programmes |
|---|---|
| 2014-15 | 2.6% |
| 2015-16 | 2.2% |
| 2016-17 | 0.9% |
| 2017-18 | 5.2% |
| 2018-19 | 6.1% |

Many factors drive programme generation, some of which depend on developments within specific professions and some of which cut across all professions. The majority of new programme generation is spread across a range of professions. Further analysis of new programme generation is included within the approval process section.

Key developments influencing this result in England include:

- Degree apprenticeships;

- Regulatory and policy changes, which have had a diversifying effect on higher education provision;

- The introduction of HEFCE-funded training models, which has incentivised new education providers; and

- Changes to the requirements for (and process around) awarding degrees, which have increased the number of private providers delivering qualifications at degree-level and above.

Key developments influencing this result in the UK include:

- The rise in paramedics' threshold qualification, triggering more degree-level proposals;

- Efforts to address projected shortfalls in some professions, leading to greater numbers in training and stronger provision of training;

- Measures to commission training places, and the identification of new training routes, in vulnerable professions; and

- Changes to medicinal entitlements for some professions (prescribing rights and medical exemptions).

Due to the diverse and interconnected nature of these factors, our engagement in supporting various initiatives and working groups across the four nations has been high. In future years, our relationships with other sector bodies will remain key. We intend to prioritise the following groups:

- Professional bodies

- Professional regulators

- The Council of Deans of Health

- Health Education England

- NHS Education for Scotland

- Health Education and Improvement Wales

- Office for Students

Approval process

Reasons for visiting programmes

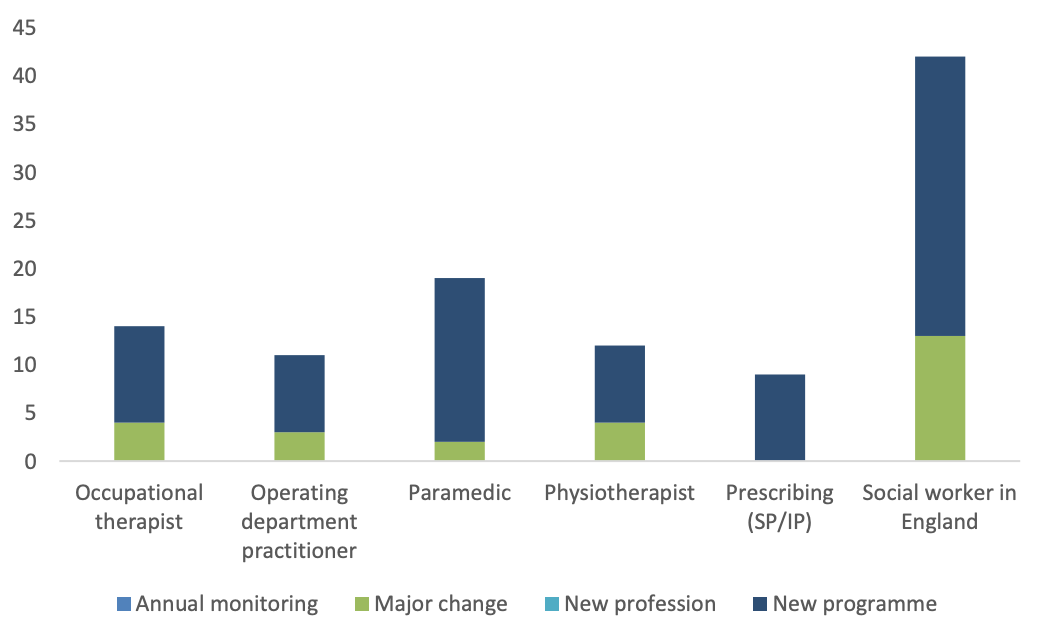

The top six professions and practice areas highlighted below reflect a broader trend of sector developments having impacts across a number of professions, leading to new programmes and significant changes to those already approved with us.

Most visited professions / practice areas, by reason for visit

A significant proportion of activity in this period continued to relate to programmes for social workers in England. With the transition of the profession to Social Work England in December 2019, we have recognised the impact this will have on our activity and resources in the short to medium term. On this basis, we completed a reduction in the size of the Education function in this financial year, and we are now positioned to operate effectively for the 15 remaining professions and post-registration areas of practice.

The impact of raising the threshold level of qualifications for paramedics* can be seen in the level of new degree programme activity and in visits triggered through the major change process. As we move toward the September 2021 deadline for the profession to become degree-entry only, we expect this trend to continue. The profession faces some key challenges, which feature commonly in our assessment of new proposals:

- The availability of suitably qualified staff – this will continue to be a challenge as the profession moves to degree level, with only a small base of suitably qualified academics within the paramedic profession to draw upon to support programme delivery.

- A suitable range of practice-based learning – the shift to degree level has brought with it the need and expectation to provide a wider range of learning opportunities than those traditionally found in ambulance service settings. These could be in other acute hospital settings, or more widely in multidisciplinary, GP and community-based settings.

- The workforce challenge – working across the four nations, there is a need to ensure that a fallow year (a year in which few or no newly qualified paramedics enter the workforce) does not occur as we move closer to September 2021.

The extension of independent and supplementary prescribing rights to a wider range of allied health professions (AHPs) has also encouraged growth in the number of programmes being offered. These programmes are becoming increasingly multidisciplinary, with AHPs commonly training alongside nurses and pharmacists. The move to recognising a single competency framework has simplified the landscape for providers, but further work is needed on fundamental questions about the recognition of prescribing qualifications across professional registers (e.g. a nurse who holds prescribing rights registering as a paramedic), and about the quality assurance of the same programmes by multiple statutory regulators (HCPC, GPhC, NMC).

Degree apprenticeships continue to drive activity for new programme approval. To date, we have approved 40 providers of apprenticeships across 8 of our regulated professions. At the time of writing, we have 18 approval cases open which are assessing new apprenticeship routes. Based on these figures, we expect this area of work to continue across most professions for the foreseeable future.

Time taken to complete the approval process

The lengthening of the post-visit process continues a trend seen in recent years, whereby the number and complexity of the conditions we place on approval have directly affected how long it takes education providers to reach a final outcome. This is to be expected given the range of developments seen within the sector.

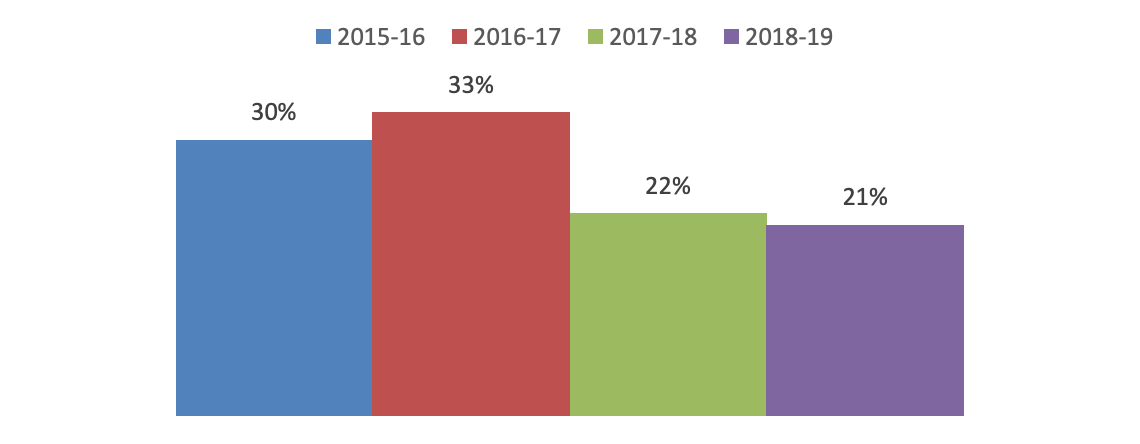

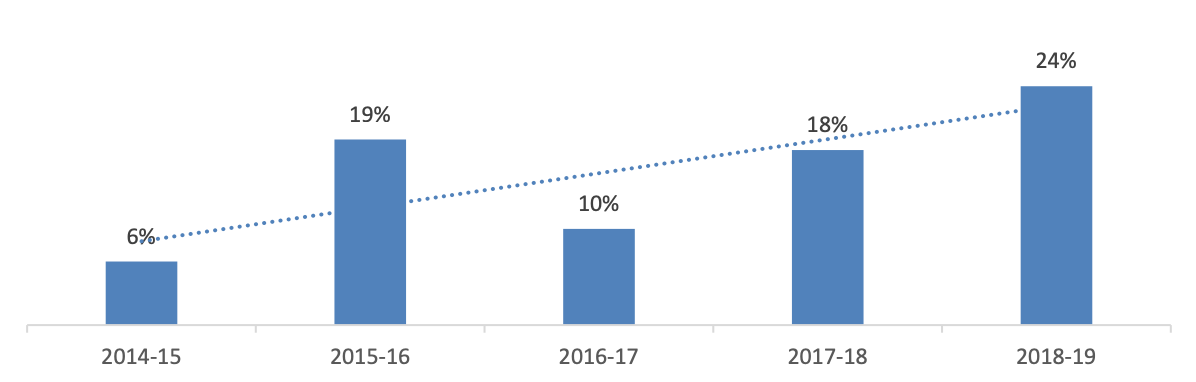

We aim to complete the post-visit process within three months of the visit concluding. This year, 21 per cent of programmes completed the process within this timeframe, continuing the pattern seen over the last four years of a falling proportion of outcomes being reached within three months.

Visit to final outcome within 3 months

Factors influencing this outcome include:

- the time taken to produce and send the visitors’ report to the education provider;

- the length of time required by education providers to submit their first conditions response; and

- the need for a second response from the education provider to meet conditions.

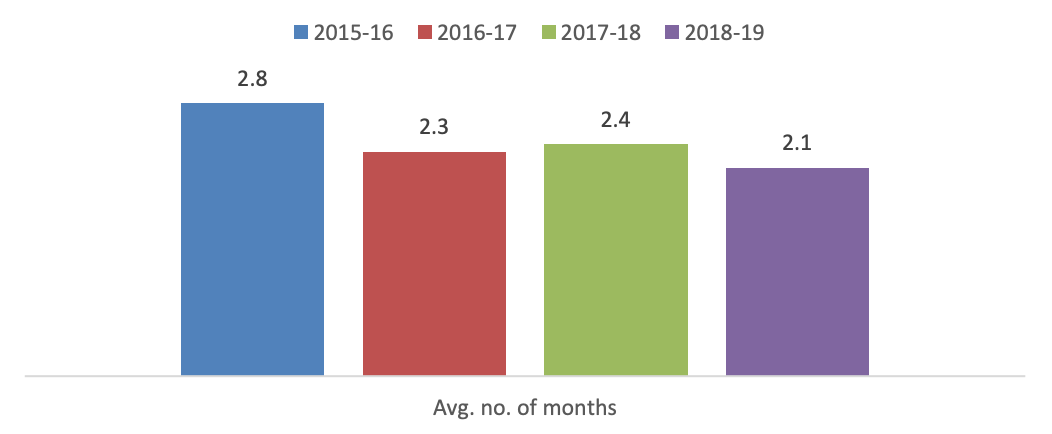

We continue to agree longer deadlines for first conditions responses, with education providers needing around 2.1 months on average to provide their first response to any conditions we place on approval. We aim to set this deadline around 6 weeks after the visit, but negotiate it on a case-by-case basis, factoring in the nature and complexity of the conditions being set.

Average time between visit date and conditions deadline

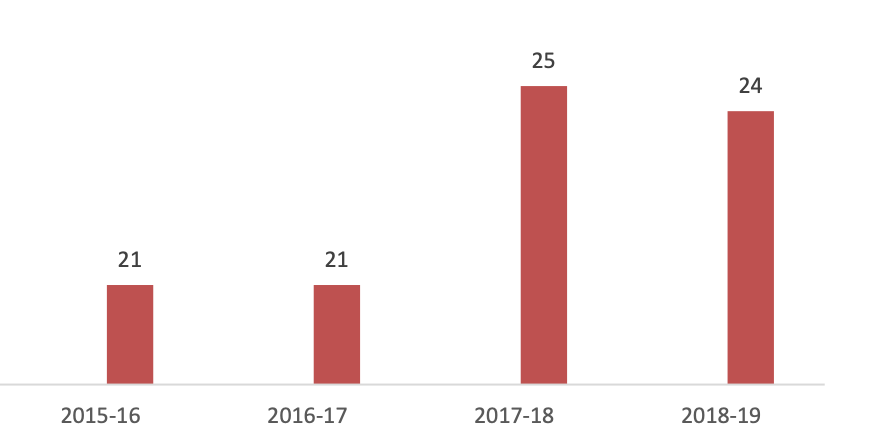

Despite this complexity, we have continued to produce visitors’ reports consistently within our one calendar month target, averaging 24 days to produce these.

Days taken for education provider to receive visitors’ report

Outcomes reached

We also reached a higher number of non-standard outcomes this year (the standard outcome being programme approval). The tables below indicate that, where this was the case, it commonly led to:

- providers withdrawing programmes from the approval process; or

- the Committee either agreeing with the visitors’ recommendation or making a different decision.

We expect non-standard outcomes to continue to occur in future years, given the complexities seen in the sector, all of which have implications for the quality of programmes and their ability to meet our standards.

Visitors' recommendation at conclusion of approval process

| Recommendation | Number |
|---|---|
| Non-approval of new programme | 5 |
| Withdrawal of approval from a currently approved programme | 1 |

Education and Training Committee (ETC) decisions made at conclusion of approval process in this academic year

| Decision | Number |
|---|---|
| Non-approval of new programme | 1 |
| Withdrawal of approval from a currently approved programme | 0 |

We introduced a New Profession / Provider (NPP) pathway midway through this period. It aims to bring forward the identification of quality issues and risks, to help minimise visits that lead to non-standard outcomes or a high number of conditions on approval. Part of our work in the next financial year will be to review the early outcomes and impacts of this pathway, to understand whether it is helping both education providers and visitors to manage the complexities of programme delivery through the approval process.

Cancelled visits

The higher rate of cancelled visits seen since 2015-16 continued in this period. Whilst the majority of cancellations (68%) took place before the visit, almost a third took place after the visitors’ report had been produced. Depending on when the cancellation takes place, we may incur additional costs for partner fees, travel and accommodation, on top of the employee costs associated with scheduling and with supporting the visitor panel and education provider.

Percentage of visits cancelled

Major change process

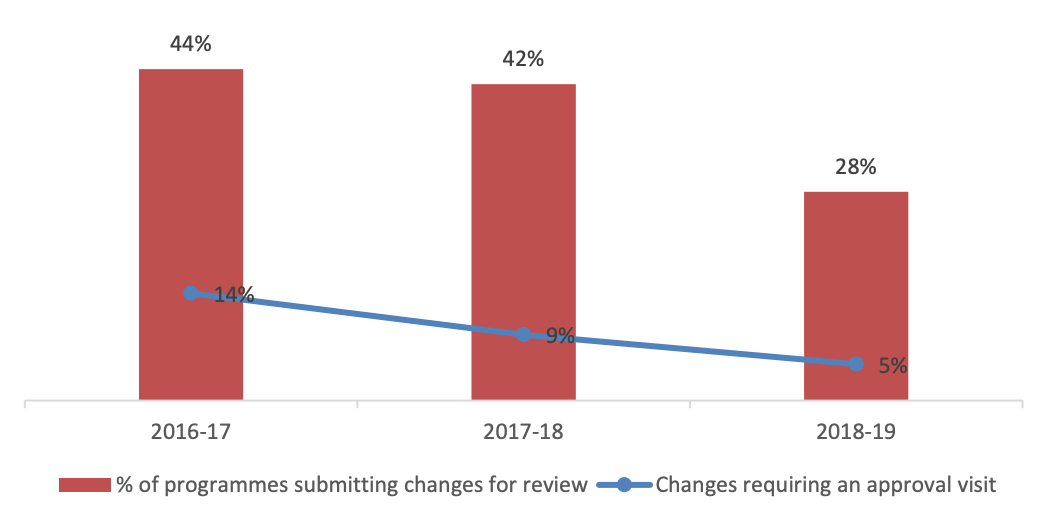

We continued to refer fewer major changes to our approval process for assessment. This is a useful indicator of the nature and extent of changes being made within the training routes for our professions.

Major changes we referred to the approval process

Our different approach to the assessment of degree apprenticeship programmes has enabled more changes to approved programmes to be considered via the major change process where it is proportionate to do so*. This has made our decision making more proportionate, whilst allowing visitors to continue to scrutinise apprenticeship proposals effectively. Next financial year, we will review our apprenticeship work across three academic years, focusing on how our approach has supported the delivery of apprenticeships across our professions.

We referred 95 per cent of all other changes to our major change and annual monitoring processes. In this regard, our open-ended approval approach still seems to be providing a cost-effective way of focusing on the assessment of significant change in a proportionate way.

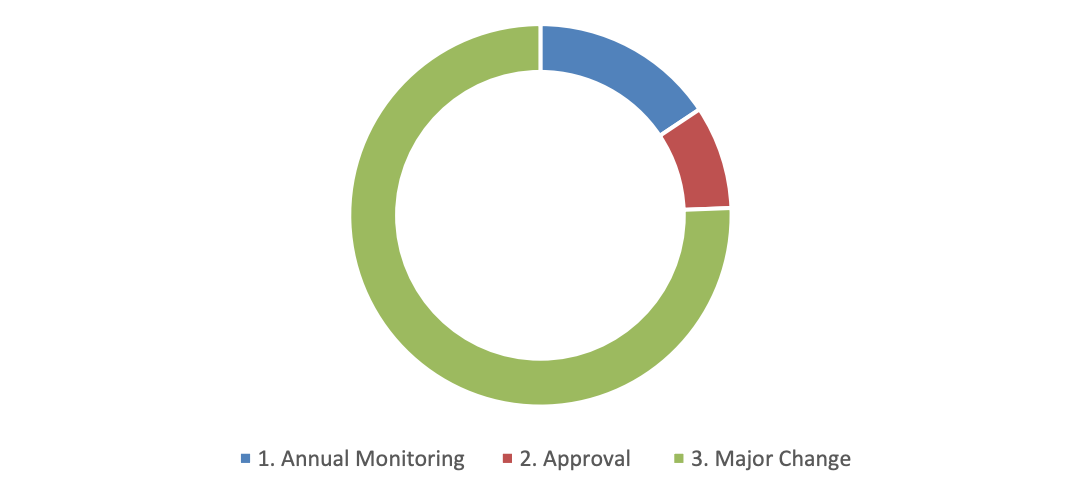

Executive recommendations made regarding change notifications

However, we processed fewer notifications in this period, a decrease of around 14 per cent relative to overall approved programme numbers. Whilst it is difficult to pinpoint the factors influencing this result, the transfer of social workers to a new regulator is a likely contributor, with providers perhaps waiting until the change in regulator to notify us of significant changes.

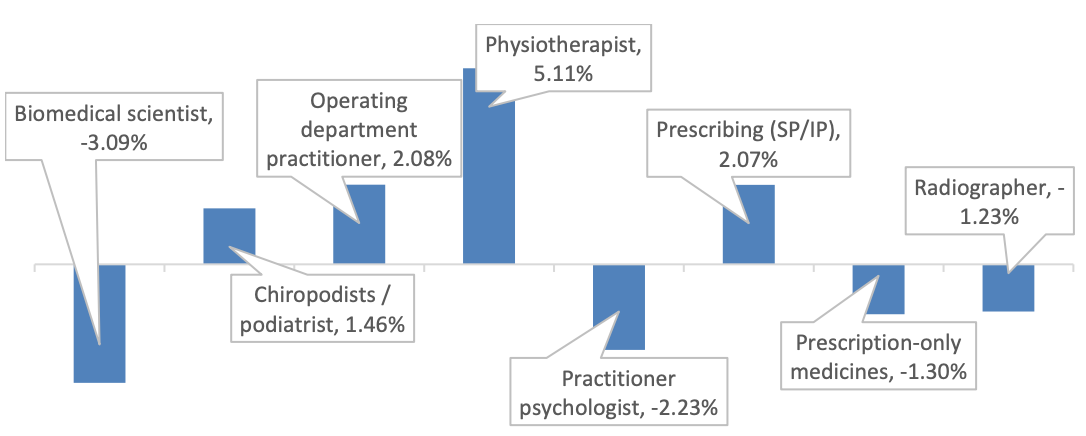

Top increase / decrease in notifications by profession

The graph above highlights the professions where we saw the largest increases and decreases in change notifications, compared cumulatively over the last three years. We have already discussed how trends such as apprenticeships, prescribing rights and workforce challenges have driven engagement through the approval process. Broadly speaking, these themes also apply here.

Major change is only effective where providers understand the need to engage with it. Based on these results, we plan to communicate further with the sector to increase this understanding, and to reinforce the importance of engagement alongside the benefits of open-ended approval and flexible, output-focused standards.

Weeks taken to complete notification and full major change process

| Process stage | 2018-19 | Five-year average | Target |
|---|---|---|---|
| Notification forms (referred to annual monitoring or approval process) | 2.4 | 2.0 | 2.0 |
| Complete the full major change process | 11.9 | 11.2 | 12.0 |

We exceeded our target timescale for the notification stage, with education providers waiting 2.4 weeks on average for an outcome against a target of 2.0 weeks. The complexity of recent changes has required more engagement with education providers to understand their impact on our standards and the most proportionate process to use to assess them, which is a likely factor in this result. We will continue to monitor this area of the process to understand whether further improvements in efficiency can be made.

Annual monitoring process

Whilst the overall number of programmes being monitored has increased since 2013-14, the numbers have stabilised over the last three years. This is consistent with the steady number of overall approved programmes during this period. Given the increase in approved programmes this year, we can expect this to impact on annual monitoring in around two years’ time, once these programmes become eligible to engage with this process for the first time.

When we require additional documentation to be submitted

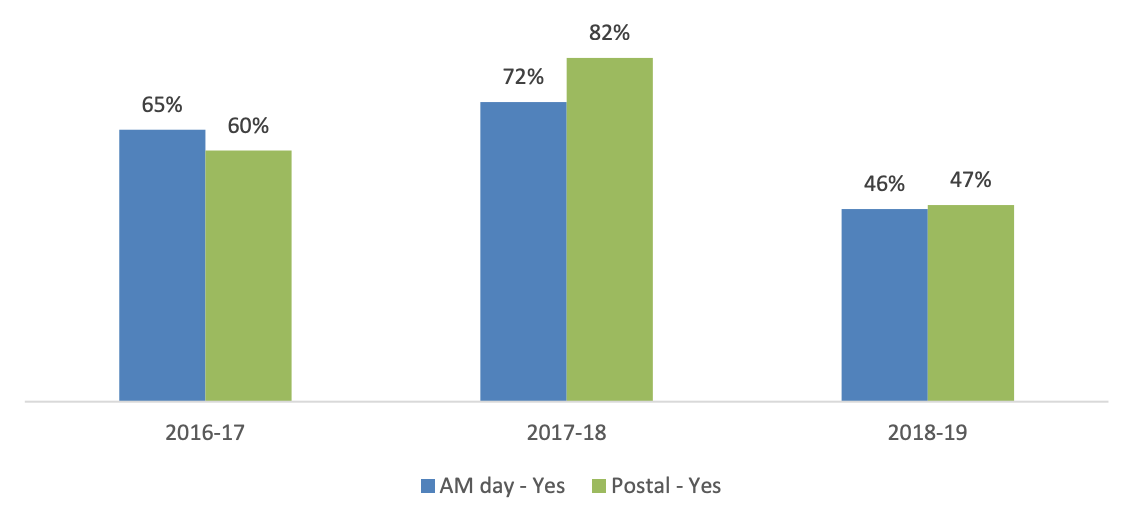

Audit submission – standards met at first attempt

Over the past three years, we have worked to address a disparity in outcomes within the annual monitoring process based on our method of assessment (assessment day versus postal assessment). This year in particular, we achieved consistency in this area, following further training and guidance for both executives and visitors, and more effective back-office systems to manage the process. We achieved this in the context of assessing against the revised education standards, and of expanding the evidence base to include practice-based learning and service user and carer monitoring information.

However, this same context has led to a lower proportion of programmes meeting our standards at their first attempt this year. We expected these challenges this year and sought to increase education provider understanding of our requirements through targeted information on our website and through webinars. We also ensured all visitors were kept up to date around these changes through online refresher sessions. We will continue these communication activities next year, and look to increase the number of providers meeting our requirements at the first attempt as a result.

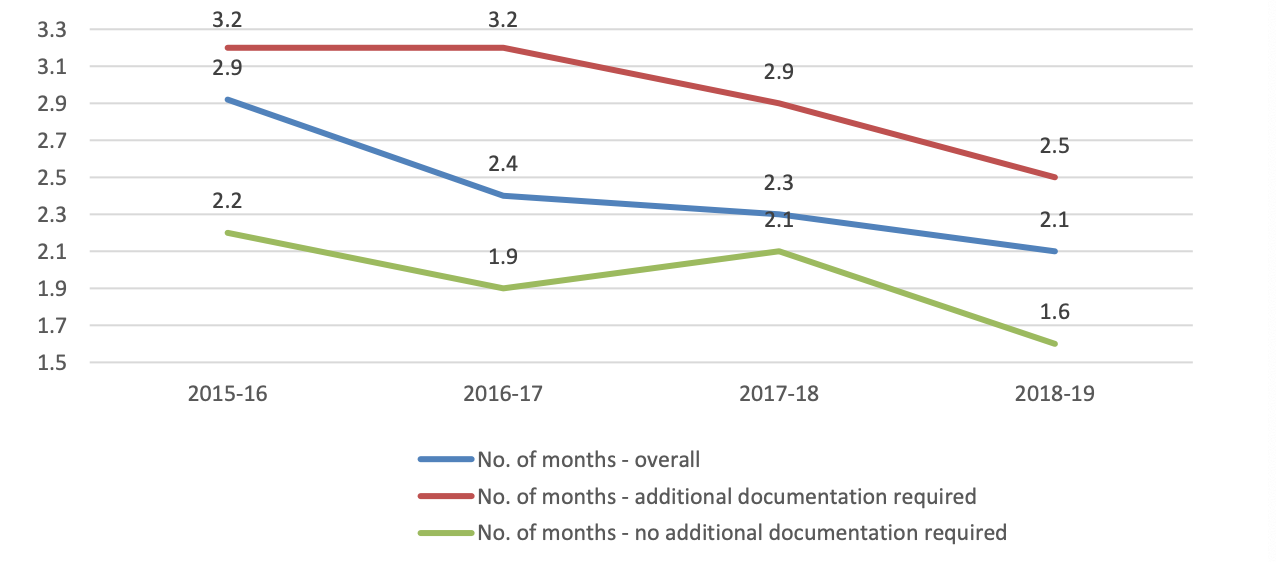

Average time taken to consider audits, compared to previous years

Pleasingly, this increase in requests for additional documentation has not affected the overall timeliness of the audit process, and we have continued to reduce the time taken to reach an outcome over the past three years. Final outcomes remain in line with previous years, with under 1 per cent of programmes being referred to the approval process for further assessment.

Programme concerns process

| Year | Number of programmes | % of all approved programmes |
|---|---|---|
| 2014-15 | 5 | 0.5% |
| 2015-16 | 6 | 0.6% |
| 2016-17 | 9 | 0.8% |
| 2017-18 | 10 | 0.9% |
| 2018-19 | 8 | 0.7% |

The proportion of programmes subject to a concern being raised and investigated continues to remain below 1 per cent.

Whilst this is the case, the process itself, once started, appears to be effective in allowing a range of outcomes to be reached. In this period we fully investigated three concerns, with one requiring no further action and two being investigated further through a directed visit. Our change in approach, seeking to resolve quality assurance issues within the concerns process itself rather than referring them to another process, continues to be effective.

Published: 15/10/2019
